Inventory / $@#%*!!!

David Kaufer and the origins of Eudora’s pepper system

Jeffrey Kastner and David Kaufer

“Inventory” is a column that examines or presents a list, catalogue, or register.

Current industry statistics suggest that more than 30 billion emails are written each day around the world. Judging by the fat mess that is Cabinet’s inbox, it would appear that roughly half of these are cc’d to us. Given this dizzying volume, we have developed a special relationship with our email program, Eudora. Originally developed as freeware by University of Illinois computer researcher Steve Dorner—who named it after the author Eudora Welty in honor of her short story, “Why I Live at the P.O.”—the program was acquired in 1991 by Qualcomm and became an industry leader by the mid-1990s. Though its position has eroded in the last decade with the rise of now-dominant programs like Microsoft’s Outlook, Eudora continues to serve a small but faithful segment of the email market, with Qualcomm estimating between 12 and 15 million users in 2003.

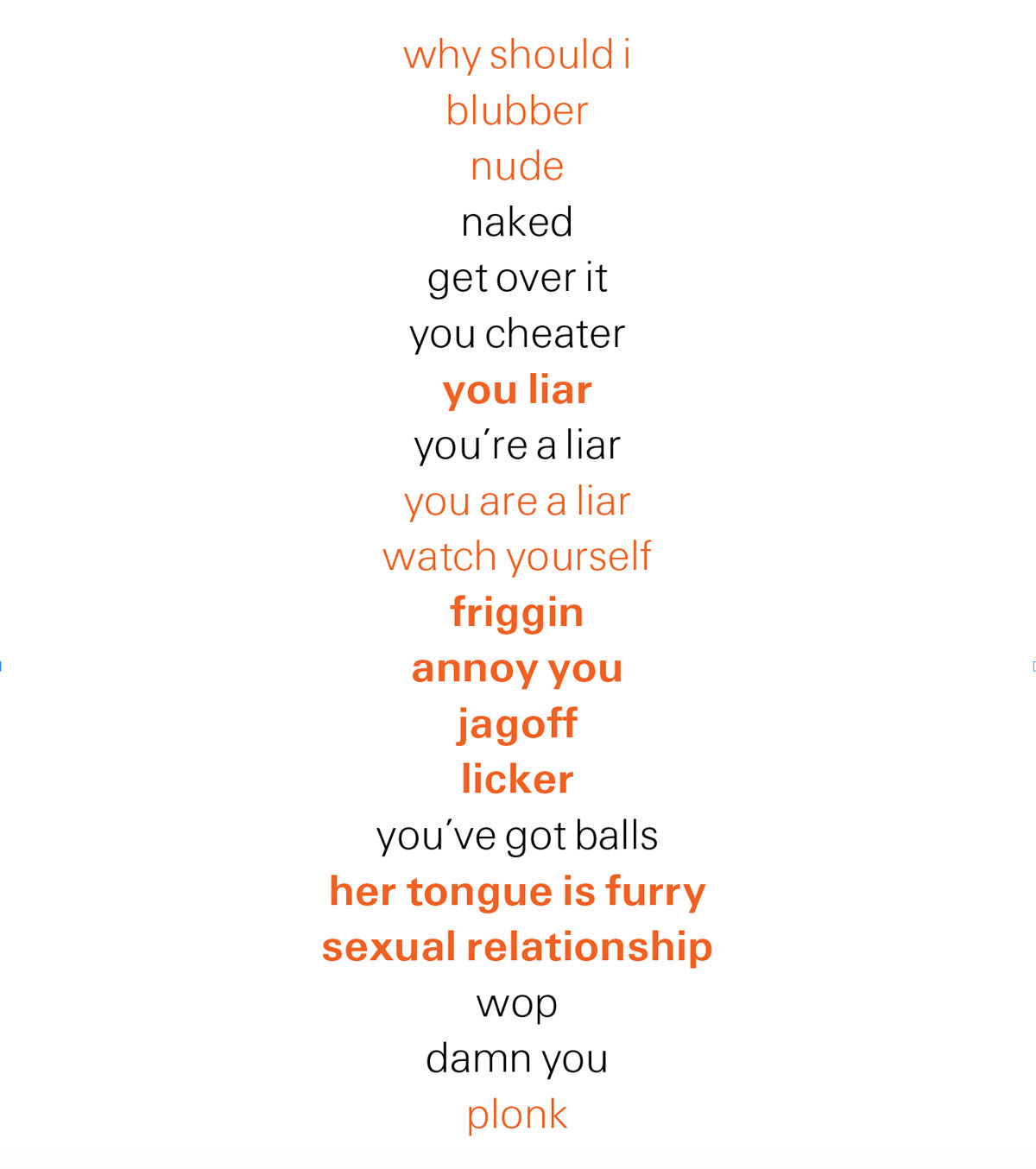

Despite the loss of market share, Qualcomm has continued to add new features to Eudora, and our favorite of these bells and whistles is a little icon containing one, two, or three red chili peppers that appears next to messages containing what, to the program’s mind, are potentially offensive words or phrases. Of course, the program doesn’t really have a “mind.” What it does have is a series of algorithms that scan the email text for certain patterns it’s been programmed to detect. How these algorithms actually function always puzzled us, even more so after we began compiling a list of words and phrases that triggered various pepper ratings. For instance, Eudora berated us to the tune of three peppers for “The doctor examined his tongue,” while giving us a pass on “You’re a terrible liar and a cheat and a disgrace to the human race.”

To unravel the Mystery of the Peppers, we turned first to Qualcomm which, while helpful, refused to divulge their proprietary list of “hot” words. They did, however, inform us that the original algorithms were developed in part based on the work of David Kaufer, head of the English department at Carnegie Mellon University in Pittsburgh, whose 2000 report, Flaming: A White Paper, examined vulgarity and rudeness in electronic communication. This installment of “Inventory” features a conversation with Kaufer about his research into linguistic patterns and the development of the pepper system, as well as a list of some of the words and phrases in our emails (sent and received!) that have been slapped with little chilies by Eudora over the last few years.

Cabinet: Tell me about the project you did for Eudora to help develop the pepper system.

David Kaufer: I did it as a summer job. My research is really into language pragmatics and writing education. I’m very interested in the small runs of language by which writers create impressions for readers, and this is not taught in normal English education because the dominant focus of textbooks is generally learning a word at a time or a sentence at a time. But where the rubber hits the road is often in these strings of one to four or five words and we memorize these patterns from reading.

I think you called these “phrasal types” in your paper.

That’s right. So a mature writer is someone who has built up over years these clumps of language that go together. And there’s some evidence that we actually create language in these bursts or patterns that we memorize. My actual research is in improving literacy, not regulating emails. But because part of my work involved collecting these “strings,” they asked me if I could write a flame detector, really as sort of a novelty item—a “cute” feature is the word they used—for Eudora.

One of the things we find intriguing about it is that it has a kind of essential subjectivity to it; it’s not entirely clear what sorts of words or phrases are going to produce what sorts of pepper responses. And so this subjectivity must reflect in some sense your human presence in this equation.

I served as a consultant to write the seed dictionary, and as far as I know they’ve continued to augment it as they see fit over the years. I haven’t really touched it since I did the original research. My paper was designed simply to lay bare my procedure—it wasn’t sophisticated, it was simply to say there’s no magic to this. When this first came up, a lot of people said, “Why is an English professor doing this? Why not a computer scientist?” But one of the big differences between me and people who work with computers is that computer scientists tend to think of language as a virus, because it’s so irregular, and they use mathematics to give it order. This makes sense for many of the applications they use, but it’s less successful when one is working on an application that crosses language and culture. One of the things you realize when you live in an English department or are a professional writer or editor, is how culturally based language is. And nothing is more culturally rooted than flaming. So it’s impossible to disentangle the language from the culture.

It necessarily had to have a kind of actual readerly input to frame it.

Exactly. What we call a flame is deeply culturally embedded.

Tell me about the research process.

First of all, on ethical grounds, you’re not going to get access to other people’s flames. Also, the flames that do the most psychological damage would probably not even be recognized by third parties, because there is so much history and context there. So I went to alt.flame, where they actually perform flaming as a public art. I have a tool that I use that allows me to read 400–500 emails into a program and then I can start looking for patterns and coding up those patterns in a dictionary. And then after I have one pass of a dictionary, I can take that and turn it on a new set of 400 emails, and let the dictionary match against the language.
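[A rough sketch of the read-code-rerun cycle Kaufer describes, offered as an illustration only. His actual tool is not documented here, so the function names, the seed phrases, and the two-email batch are all assumptions. The one detail taken from the interview is that patterns are short runs of one to five words.]

```python
import re

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def find_phrases(text, dictionary, max_len=5):
    """Return (token_index, phrase) for each dictionary phrase found in text."""
    tokens = tokenize(text)
    hits = []
    for start in range(len(tokens)):
        for length in range(1, max_len + 1):
            if start + length > len(tokens):
                break
            candidate = " ".join(tokens[start:start + length])
            if candidate in dictionary:
                hits.append((start, candidate))
    return hits

def run_pass(emails, dictionary):
    """Split a batch into emails the dictionary matched and emails it missed."""
    matched, missed = [], []
    for email in emails:
        (matched if find_phrases(email, dictionary) else missed).append(email)
    return matched, missed

# Hypothetical seed dictionary and batch, for illustration only.
seed = {"masquerades as", "disgrace to the human race"}
batch = [
    "The author's view often masquerades as neutrality.",
    "You are a terrible liar and a cheat.",
]

matched, missed = run_pass(batch, seed)

# The human step: a reader looks over `missed` and codes new patterns by hand.
seed.add("terrible liar")
matched_again, missed_again = run_pass(batch, seed)
```

[The second pass is where the dictionary's "eyesight" improves: the email it missed the first time is caught once a reader has added the pattern.]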

So the more sorting you do, the more sophisticated the sorter itself becomes.

Yes. One way of thinking about it is that I’m improving its eyesight. Computer scientists actually have learning algorithms where the computer is coding what I as a human being am doing, but you really need the human being in the loop to see the cultural dimension of it.

Right. Because it would be relatively easy to make up a list of words that would be considered out-and-out vulgar. But that’s less interesting than these other less obvious ones that are more a matter of art.

Absolutely. When people first think about a flame, what often first leaps to their mind are the so-called seven words you can’t say on TV. They think about censorship and regulation, but that’s just a tiny part of flaming and not really a central part of flaming behavior as I was studying it. This mismatch between our first impression about a flame and the actual behavior of flaming caused a lot more controversy than I thought when I first did it, because Eudora’s original interest in flame-detecting was to improve the language awareness of senders, who might be putting things in an email that, unbeknownst to them, could be read differently than what they had intended.

The peppers were originally designed to warn the writer that they had unintentionally written something that some recipient could take offense at.

And that if they were to send it that way, it would be because they at least understood the implication of what they were sending. Not to regulate their behavior but to ensure them, if they wished, a heightened awareness of what they were doing, so if they were relying on language that was abrupt, confrontational, or edgy, and knew this was the mood they were after, they could say, “Yeah, that’s exactly what I mean.” Or if that language was spilling over inadvertently into their email and was creating a mood or tone they did not want, they could say, “Well, you know, I really wasn’t aware that that was going to have that effect, and so I’m going to revise it and get the pepper out.” So it was really designed for the writer’s awareness and had nothing to do with public regulation, with 1984 Big Brother stuff.

And it doesn’t actually regulate them. It does not prevent you from sending them—it simply informs and in some cases delays.

It’s a mood rock. But as I’ve done my work in this coding up of strings and have built much larger English-based dictionaries using these sorts of string types, I did get into some issues I didn’t fully anticipate. I’ll give you an example of something sort of subtle. A law professor from another university was using Eudora and he happened to be trying to explain an author’s point of view in a book he was reviewing. So, in a long email that was mostly dry and scholarly, he threw in that the author takes a view that often “masquerades as” another kind of view. Now, the fact of the matter is that when you say, masquerade as, as opposed to a masquerade party, you’re implying that there is some deceptive intent; it’s pejorative. Academics use it all the time, just as they might say, “Someone is trying to smuggle assumptions in”—and when you say it in academic parlance, the negativity of smuggle is there but it gets drowned out by the words around it that don’t reinforce negativity. Nonetheless, in conventional English, that pejorative idea remains intact, so what happened is the professor finds he has earned a chili pepper for what he is imagining to be only dry, scholarly writing. He was wildly incensed by the pepper, thinking that the computer was advising him not to send that email. He thought it was a Patriot Act kind of thing. I have some sympathy with the professor’s point of view, because the chili pepper system needs to be sensitive to the extent to which flame-like language persists across an email. I’m quite sure the people at Eudora are sensitive to the problem and work to improve it. One thing to understand is that I had little to do with their method for calculating the number of chili peppers once flaming language is detected. They did that part.

That’s interesting to hear, because since we got involved with this idea we’ve been trying to write emails for fun to see how many peppers we get, and it’s not always clear how it works, even though the offending passage gets highlighted in the message.

Exactly. And for me, the highlighting was the key, not the number of chili peppers.

Because the highlighting allows the writer to see the potentially offending fragment and address it.

When I started coding up for them I tried to create some notion of degrees of flames. But I never systematized it.

But you did develop an additional dictionary for Eudora, the so-called H-Dictionary, which included certain words, mostly vulgarities, that would trigger a pepper even with a single appearance in a text.

Well, yes, I did want to say that there are some pretty nasty words and phrases that even if they’re there once, they’re probably going to be seen and focused on—no matter what the surrounding context. But there are also many words and phrases associated with flaming that cast suspicion on the email, but don’t on their own prove conclusive until you see enough of them. And I think that was something that was apparent to me in my research. And it was clear that suspicious words might earn one pepper, moving upward as more occurrences were found. And other patently offending words and phrases might earn an email multiple peppers on just one occurrence. But my research never involved itself in what the Eudora folks ultimately did—which was configure an algorithm for assigning one, two, or three peppers. To this day, I really don’t know anything about that algorithm.
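[The two-tier idea Kaufer outlines can be sketched as follows. This is not Eudora's algorithm, which Kaufer says he never saw and Qualcomm would not disclose. The thresholds, the word lists, and the function names are invented for illustration. The only features drawn from the interview are that "suspicious" language accumulates toward a higher rating and that a single "patently offending" term can earn multiple peppers by itself.]

```python
import re

def count_hits(text, phrases):
    """Count whole-word, case-insensitive occurrences of any phrase in text."""
    total = 0
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        total += len(re.findall(pattern, text, flags=re.IGNORECASE))
    return total

def pepper_rating(text, suspicious, severe):
    """Return 0 to 3 peppers under made-up thresholds (an assumption)."""
    hits = count_hits(text, suspicious)
    # Assumption: every two suspicious hits add a pepper, capped at three.
    rating = min(3, (hits + 1) // 2)
    # Assumption: one severe hit guarantees at least two peppers.
    if count_hits(text, severe) >= 1:
        rating = max(rating, 2)
    return rating

# Hypothetical dictionaries; the real lists are proprietary.
SUSPICIOUS = {"liar", "cheat", "disgrace"}
SEVERE = {"placeholder_vulgarity"}

print(pepper_rating("You liar.", SUSPICIOUS, SEVERE))
print(pepper_rating("What a placeholder_vulgarity.", SUSPICIOUS, SEVERE))
```

[Whole-word matching matters even in a toy like this: a plain substring search would find "liar" inside "familiar".]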

You mentioned something about the Patriot Act and I was actually going to ask you about that, because this research was done in 2000, before 9/11, and before some of the issues around interpersonal and public rhetoric—what you can and can’t say—became quite as tricky as they are nowadays. Questions about what might constitute a politically subversive or threatening statement presumably weren’t so vivid in this calculus.

No, not at all. And that’s extremely context-sensitive. And, of course, the more context-sensitive the language is, the harder it is to simply isolate it by kernel strings. You’d have to have other more sophisticated contextual filtering for that.

Can you describe this idea of contextual filtering—how you read something based on what’s on either side of it?

Well, in fact Eudora may have implemented other algorithms that actually might monitor context in ways I don’t. But there are programs that can look at word frequency and position in the sentences and can calculate what the text is most about.

The “thrust” of something.

Yes, or they could do automatic summarization, which is very common in AI circles, to try to summarize what the text is about. And if the text has a certain thrust or summary then words take on a different meaning. So if you know a text is about dentistry, for example, the word drill would have a different connotation than if the text seems like it’s about oil refining. So you basically try to sort through larger global contextual issues as a way of selecting disambiguating markers for individual words or strings.
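[A toy version of the dentistry-versus-oil example, assuming a text's "thrust" can be guessed from which topic's cue words it contains. The cue lists and the function name are hypothetical, and real summarization systems are far more elaborate than this.]

```python
import re

# Hypothetical cue words for two topics in which "drill" means different things.
TOPIC_CUES = {
    "dentistry": {"tooth", "teeth", "dentist", "cavity", "filling"},
    "oil": {"oil", "well", "rig", "crude", "refinery"},
}

def guess_topic(text):
    """Return the topic whose cue words overlap the text most, or None."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    scores = {topic: len(words & cues) for topic, cues in TOPIC_CUES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None

print(guess_topic("The dentist used a drill on the cavity."))
print(guess_topic("The rig crew lowered the drill into the well."))
```

[Once a topic is guessed, a dictionary could attach a different reading to "drill" for each one. With no cue words present, the sketch declines to guess.]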

And presumably punctuation and syntax are also a part of this.

Absolutely. Take a word like mixed. If you say, “He mixed the salad,” that’s a motion. But if you use mixed as the last word of a sentence, before a period, the chances are much higher that it’s a statement of opinion. So with my string and dictionary work, I use punctuation markers a lot. For instance, a regular verb stem that starts a sentence is much more likely to be an imperative. Writers know these things and most of my professional career has, for the last ten years at least, been about simply trying to externalize things that writers know. When I was asked to do the flame detector as a summer job, I was approaching it from the point of view that it would be a very nice experiment to see how these strings work and support a particular system where the stakes are very low. And you know, I have to say that although I wrote a flame detector and to some people it looks regulatory and Big Brotherish after the fact, I do have serious ethical reservations about any machine that tries to evaluate a human communication. There’s a large research literature that shows how often systems get used for purposes they were never designed for. But when this idea was first on the books, it was really a sender-awareness tool more than a regulatory tool.
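[The two punctuation cues Kaufer mentions, a word falling just before the period and a verb stem opening a sentence, can be checked mechanically. The sketch below assumes a crude sentence splitter and a tiny hypothetical list of verb stems. It only flags the positions; it does not decide whether a sentence really is an opinion or an imperative.]

```python
import re

# Hypothetical list of verb stems; a real dictionary would be far larger.
VERB_STEMS = {"send", "stop", "read", "mix"}

def split_sentences(text):
    """Very rough splitter: break after ., !, or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def is_sentence_final(sentence, word):
    """True if `word` is the last word before the closing punctuation."""
    words = re.findall(r"[A-Za-z']+", sentence)
    return bool(words) and words[-1].lower() == word.lower()

def starts_with_verb_stem(sentence):
    """True if the first word of the sentence is a known verb stem."""
    words = re.findall(r"[A-Za-z']+", sentence)
    return bool(words) and words[0].lower() in VERB_STEMS

for sentence in split_sentences("He mixed the salad. The reviews were mixed."):
    print(sentence, is_sentence_final(sentence, "mixed"))
```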

Do you use Eudora?

You know what? I don’t.


Jeffrey Kastner is a Brooklyn-based writer and senior editor of Cabinet.

David Kaufer is a professor and head of the English department at Carnegie Mellon University. For a look into his research in language function, out of which the work on flaming comes, see his latest book (written with Suguru Ishizaki, Brian Butler, and Jeff Collins), The Power of Words: Unveiling the Speaker and Writer’s Hidden Craft (Lawrence Erlbaum Associates). For more information, visit www.routledge.com. [Link updated on 10 November 2021—Eds.]