The Clean Room / Reading the Working Body after September 11

Marked by labor and leisure

David Serlin

“The Clean Room” is a column by David Serlin on science and technology.

In his performance piece Blood Is the Only Good Adhesive in Heaven (2001), Sxip Shirey assembles pavement fragments chiseled from sidewalks around New York City that are covered with the calcified, blackish-purple remains of chewing gum. Shirey explains that each remnant of gum we find on the street contains not only the genetic residue of its former owner but also that of dinosaurs, since most commercial chewing gums are manufactured using petroleum byproducts. In the act of gum chewing, Shirey says, the DNA encoded in our saliva intermingles with the remains of prehistoric beasts. The surfaces of the city, it seems, are covered in the collective DNA of dinosaurs as well as our friends, celebrities, anonymous flâneurs, and those long since departed, now stamped forever into cement exteriors and onto the undersides of school desks and theater seats. To prove his point, the artist takes some gum collected from a sidewalk in Astoria, places it in his mouth, chews it methodically, and in a meditative trance narrates the life experience of its previous owner.

Shirey’s observation that personal histories are embedded in the physical surfaces of the external world is a poignant commentary on the complex relationship that New Yorkers—and, more broadly, people living in cities—have to their physical environment, especially after September 11. Without recourse to the elastic properties of gum, New Yorkers have found new ways to narrate their lives in the shadow of a massive building site, the perpetual excavation of which continues to determine the identities of the people who inhabit that landscape. In the anthropology of disaster, the physical evidence that we leave behind in our distress, perhaps even more than the stories that we tell each other, may be the only truly authentic register of our social identity. Unlike the gum remnants from which Shirey recovers his narratives, which were left behind voluntarily or even deliberately, it was the victims of the World Trade Center attacks themselves, and not their personal detritus, that were inscribed both literally and figuratively into the landscape of downtown Manhattan. Within a day or two of the attacks, the city was covered not only with ash and smoke but with posters describing in intimate detail the physical attributes of missing persons: hair and eye color, birthmarks and tattoos, clothing last worn, and any other distinctive features. Rescue teams implored families to bring to Ground Zero items that might help identify bodies and body parts: photographs and, later, personal items that might contain DNA, such as swatches of fabric, combs, and hairbrushes. Under such dire circumstances, many believed that a DNA match might be the only logical means of distinguishing one victim from another. Experts claim that DNA testing identifies an individual more precisely than virtually any other scientific method of identification, including fingerprinting.

Identification methods that rely on evidence taken from the material body, like DNA, have complex histories, many of which lack the humanitarian dimension exhibited at Ground Zero. The well-known systems of criminal identification developed by 19th-century European social scientists such as Alphonse Bertillon, Francis Galton, and Cesare Lombroso were ideological forerunners of the genetic technologies used today. They represent the earliest taxonomies for identifying and classifying criminals by measuring their faces, limbs, and other body parts. On wall charts designed for massive lecture halls, as well as on posters hung prominently in police bureaus, Bertillon, Galton, and Lombroso displayed systematic comparisons of physical features informed by descriptions of ethnic “types” and assumptions about “inferior” family lines that produced unhealthy bodies and corrupt societies. Not everyone passively endorsed these systems of identification. In 1881, for example, when Edgar Degas first exhibited his now-revered sculpture Little Dancer Aged Fourteen, both his supporters and his critics were appalled because Degas placed the sculpture in a gallery space surrounded by police portraits of criminal physiognomies. By comparing the pronounced physical features of the ballerina with the offensive portraiture, Degas was able to neutralize the claims that Bertillon and his followers made on behalf of scientific authority. In effect, Little Dancer Aged Fourteen was a deliberate intervention into what some thought was a closed debate about the power of science to police criminal types and to discourage reproduction between social inferiors.[1]

When the Bertillon system for categorizing criminals was introduced to law enforcement officers in the United States in the late 19th century, the federal government believed that the system placed too much emphasis on facial features and other external characteristics (especially ones that could be altered through accident, disease, and, later, the techniques of plastic surgery) and instead adopted Galton’s new, and controversial, method of fingerprinting. As Simon Cole has described, even the so-called scientific objectivity of the fingerprint could be used to maintain the dominant racial and gendered hierarchies of the early 20th century.[2] For xenophobic Americans during the Progressive Era, the standardization of fingerprinting imputed to immigration a criminal dimension: Chinese railroad workers, for example, were classified by their thumbprints, the very same system used to keep track of female prostitutes. No matter what their occupation, men and women from non-Western countries, especially from Asia, who sought to become naturalized citizens were prevented from doing so until 1952.[3]

Like fingerprinting, DNA testing has emerged with its own conspicuous set of scientific and philosophical problems. The purported authority and objectivity of DNA-based evidence makes it possible for many prosecutors to maintain long-standing racist or xenophobic policies against people who fit certain “genetic profiles.” Political scientist and George W. Bush appointee John J. DiIulio, Jr., for instance, sees poor, mostly black, inner cities as genetic incubators for a new generation of criminals he has designated as “superpredators.” The potential to abuse DNA testing continues to remind many concerned with civil liberties of the unhappy marriage of applied genetics and public policy that emerged at the height of Galton’s eugenics movement, the legacy of which was resurrected and endorsed by Charles Murray and Richard Herrnstein in their controversial 1994 book The Bell Curve. Furthermore, too much reliance on DNA’s authority reflects a pronounced lack of communication and critical thinking between the growth of genetic knowledge in the biomedical sciences and its application in the social sciences, which is why concerned scientists and activists insisted that the federal government establish a bioethics committee as part of the National Institutes of Health’s multibillion-dollar Human Genome Project.

Since September 11, the contemporary emphasis placed on DNA testing has underlined, if nothing else, the virtual invisibility of physical markings that designate one’s personal history or social status. Traditionally, working with one’s body was the primary means by which citizens forged professional identities in large northeastern industrial cities like New York, and such work left distinctive traits that were emblematic of one’s relationship to labor. According to Joshua Freeman’s recent book Working-Class New York, New York’s identity as an industrial center defined the lives and bodies of its residents for the greater part of the 20th century. Freeman reclaims the city’s past in the postwar era as a kind of utopian (some even claimed socialist, especially during Harry S. Truman’s presidency) network of shipyards, construction sites, breweries, refineries, and both small- and large-scale manufacturing districts with soaring union memberships and labor-oriented social clubs.[4] The area that later became known as the World Trade Center was originally an extended Irish, Jewish, and Arab enclave of restaurants and tenement buildings, wholesale food markets, coffee roasters, and warehouse storage. The building of the World Trade Center, as Robert Fitch has explained in The Assassination of New York, destroyed this neighborhood and became symbolic of downtown planners’ stalwart commitment to the white-collar industries of finance, insurance, and real estate.[5] Since the 1970s, the privileging of a service economy in the United States, one defined by large multinational corporations rather than by small local businesses, has dissolved the connection between doing manual labor and earning a living for a large proportion of Americans.

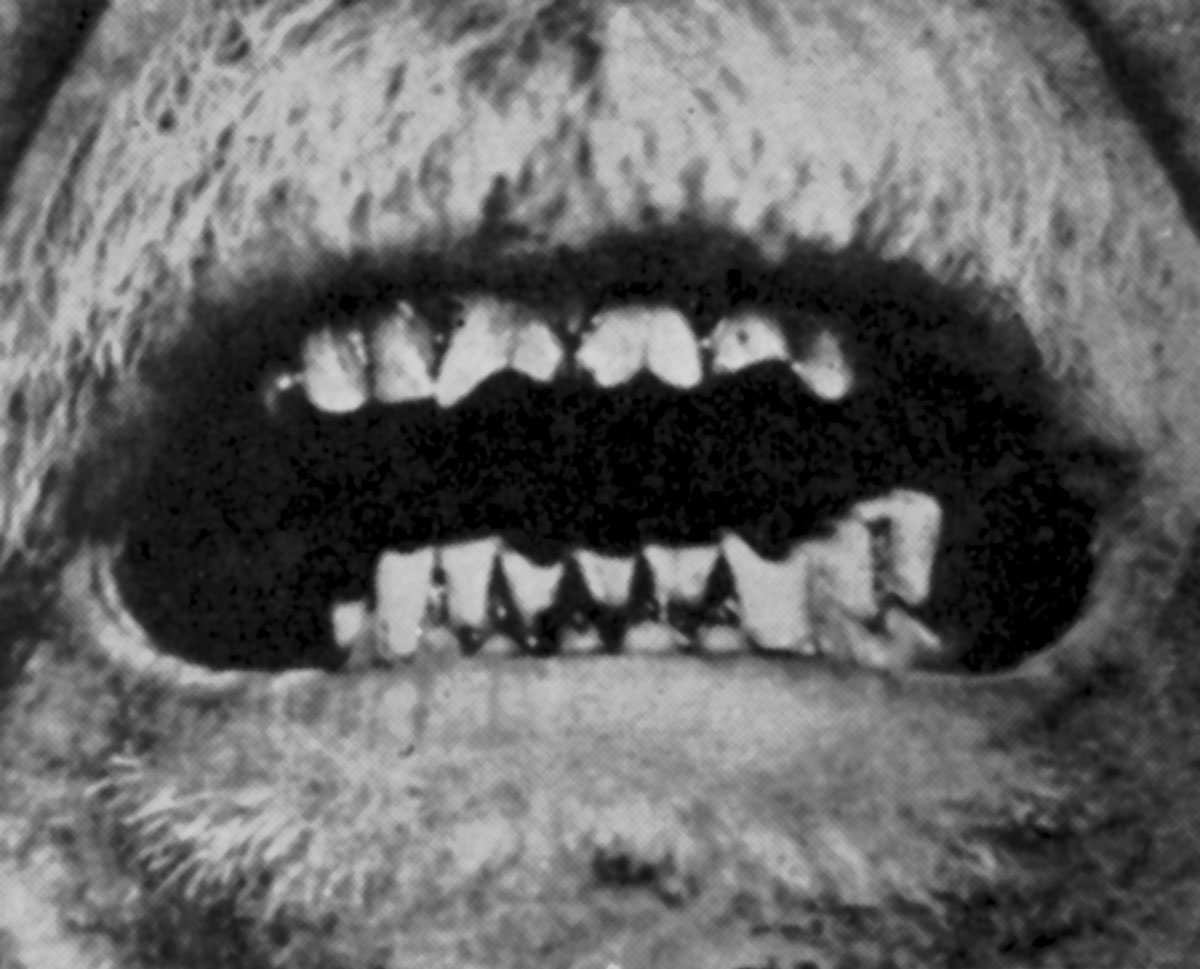

In 1948, Francesco Ronchese, the chief dermatologist at Rhode Island Hospital in Providence, published Occupational Marks and Other Physical Signs, a fascinating medical reference book produced in the first moments of the transition from an industrial to a service economy.[6] Ronchese believed that the examination process, which covered everything from regular check-ups to autopsies, was a golden opportunity for physicians and forensic scientists to learn how to read and interpret the markings on the bodies of patients in relation to their social value. Providence, a flourishing northeastern city that did not witness its own industrial decline and subsequent impoverishment until the 1970s, provided Ronchese with a seemingly unlimited palette of workers who represented a vast range of industrial, manufacturing, and service occupations that relied primarily on physical labor. Through long verbal descriptions, x-rays, and illustrations, Ronchese developed detailed categories showing how the signs of occupation, as well as occupational hazards, were manifest physically on the human body. He catalogued the ways in which carpenters’ lips were indented with vertical grooves from holding nails, secretaries’ buttocks were callused from sitting in uncomfortable office chairs, and florists’ arms bore permanent scars from pruning and arranging flowers. For those of us in 2002 who are nostalgic for manual labor, Ronchese shows a modern industrial landscape of productive but exhausted streetcar conductors, elevator operators, ticket distributors, office clerks, ice deliverers, laundry washers, and ice cream makers clad in starched uniforms and greasy overalls, wearing velvet gloves and sensible shoes, using ice tongs and hard soap, and operating and repairing machinery.

Certain subgenres of occupational marks, such as the earnest though scarred arms of women welders, emerged historically from the rise of female labor during World War II in the era of “Rosie the Riveter.” Ronchese also included marks that designated other occupations, many of which were uncommon even in a city like Providence. Nuns were identifiable by a lifetime of aggravated kneecaps and elbows and by indentations on the forehead from the vagaries of wimples and veils. Italian and Spanish winemakers tended toward “grape pedipulation,” that is, the appearance of violet stains on the distorted soles of the feet from stomping on grapes in oak barrels.

In the late 1940s and early 1950s, when normalization and socialization were components of both social science and public policy, the physical status of the body was the telltale sign of one’s social identity. In 1955, for example, Betty Lou Ruble of Pan American Airlines was presented with an award for the “Stewardess Who Has Traveled the Longest Distance in a Week Serving Drinks”; her accomplishment was measured with a pedometer strapped to her leg. In the celebrated service economy, especially as personified by the sexy stewardess in the modern age of Jet Set travel, what could be more symbolic of gender progress than the contemporary professional woman in a Pucci ensemble serving a randy businessman his perfunctory G&T? Yet despite the glamour associated with jet travel, Ruble’s sore muscles and feet—characteristic of the age-old servility of women—were probably the only palpable evidence of her career apart from traces of eye shadow, Beefeater stains on her fingers, and the possible lures of plastic surgery.[7]

Baby boomers in the United States—the self-congratulatory children of the “Greatest Generation”—may be the first in modern history whose bodies are unmarked by traditional signs of physical labor. As Slavoj Žižek has quipped, only in James Bond films, and especially in those action scenes set in underground laboratories in secret mountain hideouts or volcanic craters, do Westerners catch a glimpse of live workers actually engaged in the production process.[8] No matter how much a modern Nautilus machine may resemble a steam-powered loom, a gym-toned body that is unnaturally disproportionate to one’s professional identity reflects no relationship to physical labor other than its complete absence. This may explain why, since September 11, the importance of physical labor has conferred a new dignity on firefighters, paramedics, rescuers, and volunteers. In light of the recession and the collapse of the dot-com economy, it is no wonder that firemen have replaced financial elites as the men of the hour. Since New York, like much of the United States, has moved a large proportion of manufacturing and industrial production to other parts of the world, the Dickensian workplace maladies and physical ailments that accompanied the Industrial Revolution are now found predominantly (and, in many cases, almost exclusively) in non-Western countries. But while the service and information economy relies on mental labor, it has also produced health problems different from those suffered by our ancestors, including fatigue and attention disorders, carpal tunnel syndrome, and anxiety- and stress-related conditions.

Now that we have attributed so much meaning, and conferred so much credibility, to DNA as the genetic fingerprint of one’s physical sovereignty, it has become the singular (if reductive) substitute for identity in the absence of other discernible physical markings. Despite its appeals to scientific precision at the molecular level, DNA testing merely registers an accretion of genetic detail, which outlines the contours of one’s molecular identity but fails to capture much else. Indeed, genetic variability between members of the same species—characterized as somewhere between three and five percent—is so infinitesimally small and so potentially negligible that for the past four decades scientists have been trying to revise standard social designations such as “race” (and, more controversially, “gender”) to challenge some of the divisive assumptions at the heart of human population genetics. The prospect of genetic differences that actually do divide us from other members of our species, however, is even more confounding. Joshua Lederberg, who shared the 1958 Nobel Prize in Physiology or Medicine for his work on genetic recombination in bacteria, claims that of the one hundred trillion—100,000,000,000,000—cells that make up the typical human body, over ninety percent are bacteria. This means that the boundaries that distinguish us from others, whether members of our own species or organisms at the microscopic level, are not only highly permeable and even elusive but may not exist at all.

Biomedical predictions of the potential uses of DNA-based identification sciences give us pause to consider not only how future societies will scrutinize our bodies but also how we will identify ourselves and each other. What within our own bodies, ultimately, is uniquely identifiable as belonging exclusively to our own selves? What is it, exactly, that constitutes the “us” in the physical spaces that we occupy? Will experimental gene therapies, embryonic stem cell interventions, and genetic enhancement technologies neutralize our genetic fingerprint—and if so, will it even matter? Future generations may use information gleaned from DNA as a prerequisite for long-range career planning, but for the time being we can only expect it to indicate the family lines to which we belong or the diseases to which we may be predisposed. After our death, our DNA will tell others nothing about who we actually were or what kind of work we performed during our lifetime.

1. Martin Kemp and Marina Wallace, Spectacular Bodies: The Art and Science of the Human Body from Leonardo to Now (Berkeley: University of California Press, 2000), pp. 140–143.

2. See Simon Cole, Suspect Identities: A History of Fingerprinting and Criminal Identification (Cambridge, MA: Harvard University Press, 2001).

3. See Ian F. Haney López, White by Law: The Legal Construction of Race (New York: New York University Press, 1996), pp. 42–44.

4. See Joshua Freeman, Working-Class New York: Life and Labor since World War II (New York: New Press, 2000).

5. See Robert Fitch, The Assassination of New York (New York: Verso, 1994).

6. All references are to Francesco Ronchese, Occupational Marks and Other Physical Signs: A Guide to Personal Identification (New York: Grune and Stratton, 1948).

7. Keith Lovegrove, Airline: Identity, Design, and Culture (London: Laurence King, 2000), p. 24.

8. See Slavoj Žižek’s widely circulated article in response to the 11 September 2001 attacks at web.mit.edu/cms/reconstructions/interpretations [link defunct—Eds.].

David Serlin is an editor and columnist for Cabinet. He is the co-editor of Artificial Parts, Practical Lives: Modern Histories of Prosthetics (New York University Press, 2002).
